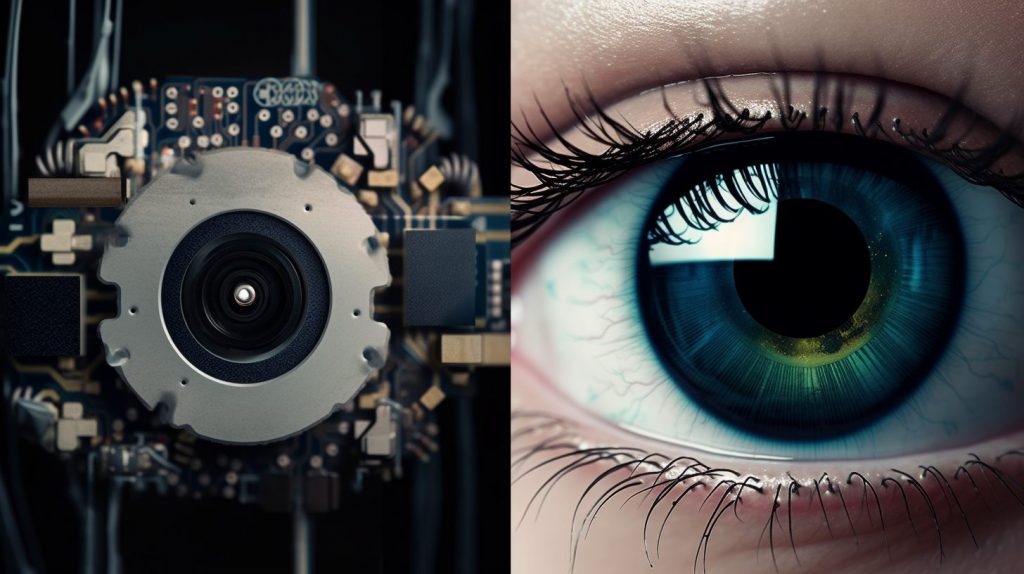

How artificial intelligence perceives the world through our eyes

About Prof. Dr. Hilde Kuehne

Prof. Dr. Hilde Kuehne conducts research at Goethe University Frankfurt in the Department of Computer Science and at the MIT-IBM Watson AI Lab. She studied Computational Visualistics at the University of Koblenz-Landau and completed her PhD at the Karlsruhe Institute of Technology.

Eyes and brains for the AI

Robots that work alongside humans and always have the right tool at hand: in theory, a machine can do whatever a human is capable of. To do so, however, it must understand human actions and be able to analyse movements.

Kuehne teaches AI how to see. Action recognition began with the automatic classification and recognition of simple video sequences such as clapping or jumping. Today, Kuehne trains large neural networks that can automatically recognise and classify a wide range of human movements and activities. For her video database for human motion recognition, she was awarded the TPAMI Test-of-Time Award in 2021.

The challenge: video data is complex. The same action can be performed with different motion sequences, and an AI should still recognise it reliably. For Kuehne, the solution lies in multimodal learning, which uses text and audio in addition to video as training data. This additional data helps the AI become more autonomous, in a process similar to human learning. The more human-like the model's understanding of the data becomes, the more closely its interpretations match reality. This in turn improves motion recognition in the real world.

Kuehne relates the different modalities to one another in a shared coordinate system. Movements in videos, spoken language and text are segmented into sequences, encoded, and mapped into a common "multimodal embedding space". In this space, data points are arranged according to their semantic proximity. As the amount of data grows, this creates a universe of information that recognises movements and activities in the real world better, faster and increasingly without manual input.
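To make "semantic proximity" a little more concrete, here is a minimal Python sketch. It assumes that video and text encoders (not shown) have already mapped clips and sentences into one shared space; the clip names, captions and three-dimensional vectors are hypothetical toy values, and cosine similarity is used as a common but assumed proximity measure, not necessarily the one used in Kuehne's models.

```python
import math

# Hypothetical toy embeddings. Real systems learn vectors with hundreds of
# dimensions; here we simply assume encoders already produced these values.
VIDEO_EMBEDDINGS = {
    "clip_clapping": [0.9, 0.1, 0.0],
    "clip_jumping": [0.1, 0.95, 0.05],
}
TEXT_EMBEDDINGS = {
    "someone claps their hands": [0.85, 0.15, 0.05],
    "a person jumps in the air": [0.05, 0.9, 0.1],
    "a train enters the station": [0.0, 0.1, 0.95],
}


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors (1.0 means same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def nearest_text(video_vec, text_embeddings):
    """Return the caption whose embedding lies closest to the video embedding."""
    return max(
        text_embeddings,
        key=lambda caption: cosine_similarity(video_vec, text_embeddings[caption]),
    )


if __name__ == "__main__":
    for clip, vec in VIDEO_EMBEDDINGS.items():
        # prints "clip_clapping -> someone claps their hands"
        # and "clip_jumping -> a person jumps in the air"
        print(clip, "->", nearest_text(vec, TEXT_EMBEDDINGS))
```

Because both modalities live in the same space, the same distance function works in either direction: a caption can retrieve matching clips just as a clip retrieves its closest caption.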

For AI to perceive the world the way a human does, it needs large amounts of data, but recording and labelling that data is time-consuming and expensive. Current models are trained on about one million videos, which Kuehne considers too few. She sees a problem in scaling: "Data on the internet only represent a part of the real world, not the whole world." Videos on YouTube or Instagram are often similar in their visual language, so the neural network cannot exploit its full potential.

Kuehne sees one solution in synthetic data, which can increase and vary the amount of training data almost without limit. This matters because videos from the internet often have an uneven gender distribution or do not adequately depict the movements and activities that occur in the real world.

Interdisciplinary research takes AI further

To exploit the full potential of AI, Kuehne collaborates with scientists from other disciplines, such as linguistics. She is convinced: "Research is fundamentally becoming more collaborative. The days when every researcher had his or her own silo and did research exclusively in that silo are over." That's why she appreciates the hessian.AI.er network.

Kuehne integrates ideas from the vision, language, text and machine learning communities into her research: "The real value lies in the exchange between the different disciplines. For action recognition and multimodal learning, connecting different modalities is indispensable: only in this way is it possible to integrate the different perspectives on the world into AI development."

The researcher therefore combines language and visual data carrying different levels of information so that AI systems work better and are useful to people: for example, assistance systems that recognise falls and support people in everyday life, or monitoring systems in underground stations that detect people on the tracks and stop train traffic in time. In such places, AI is meant to be the eye that humans lack.